Survey Says: Video QC and Monitoring Are Essential for Streaming Quality, QoE, and Customer Retention

In partnership with Interra Systems, leading streaming media industry expert Dan Rayburn conducted a survey in 2022 to better understand the steps media companies are taking to quality control (QC) and monitor their content. The survey collected data from media industry decision makers. Its aim was to gather their thoughts on employing comprehensive QC and monitoring methods at key video processing and delivery points in the media distribution chain, and find out how important this is for optimum quality and efficiency. This white paper digs into the 155 participants’ responses and explores key findings, including why companies are implementing QC and monitoring, the extent to which they’re doing so, and the key challenges they’re facing.

Content Quality and Customer Retention Are Linked

When asked if there is a strong correlation between content quality and customer retention, over 70% of respondents answered in the affirmative. The reason is that consumer expectations are exceptionally high. Consumers expect not only a variety of compelling content, but also outstanding picture quality and an exceptional delivery experience. And because numerous industry forums are dedicated to picture quality rankings for every OTT streamer, word of a glitch spreads fast. The public regards these forums as highly credible, so a bad review, especially for premium content, can create a negative impression of a streaming service and sway a consumer’s decision to subscribe. As a result, many OTT providers are making audio and video quality, and the overall viewing experience, high priorities, which means building QC and monitoring into their workflows.

Monitoring tracks the performance of a broadcasting or streaming network and provides important information such as uptime, the probability of downtime, error rates, quality issues, and latency. Performed in real time, it focuses on the delivery side to ensure an optimal QoE for viewers. QC involves verifying whether a video meets the delivery specifications for where it’s going to be played. A simple QC system looks at the codecs and profiles (bit rates) used to compress video, chroma levels, video artifacts, and more. However, OTT streaming has extended the definition of QC beyond audio and video quality and compliance to include content categorization, captioning, subtitling, and all metadata management. In other words, a simple QC system is no longer enough.
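To make the idea of checking a file against a delivery specification concrete, here is a minimal sketch in Python. The `DeliverySpec` fields, the asset dictionary keys, and the thresholds are all hypothetical, chosen only for illustration. They do not describe any particular QC product or any streaming company's actual specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeliverySpec:
    """Hypothetical delivery specification for one destination."""
    video_codecs: frozenset
    max_bitrate_kbps: int
    require_captions: bool


def qc_check(asset: dict, spec: DeliverySpec) -> list:
    """Return a list of human-readable QC failures (empty list means pass)."""
    failures = []
    # Codec compliance: the asset's codec must be one the destination accepts.
    if asset.get("video_codec") not in spec.video_codecs:
        failures.append(f"codec {asset.get('video_codec')!r} not allowed")
    # Bit rate compliance: the encoded bit rate must not exceed the spec.
    if asset.get("bitrate_kbps", 0) > spec.max_bitrate_kbps:
        failures.append("bit rate exceeds spec")
    # Beyond audio/video: captions are treated as part of QC as well.
    if spec.require_captions and not asset.get("caption_tracks"):
        failures.append("captions missing")
    return failures
```

A real QC system would also inspect the decoded audio and video (artifacts, chroma levels, loudness), which a metadata comparison like this cannot see.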

The Need for Comprehensive QC at the Video Processing Stages

For content acquisition and distribution processes that involve complicated decoding and transcoding operations, where video and metadata integrity must be preserved, comprehensive QC is required. It helps detect issues such as missing audio, poor video quality, problems with loudness or aspect ratios, and missing dubs, which helps ensure regulatory compliance and conformity to the different delivery specifications established by streaming companies. It can even help fix these errors. Without QC, it is expensive and time consuming for media companies to determine why their content was rejected, and it takes even more time and money to address the issue. Quality issues will always be a factor, especially with media companies pushing the limits on compression to save bandwidth. And with today’s ever-increasing volume of content, comprehensive QC has become one of the most important functions of content preparation.

Considering that QC and monitoring are essential to quality assurance, one would expect the majority of respondents to be performing these vital functions. Factors such as complicated streaming workflows, software installation and maintenance costs, and collaboration across the organization itself all drive home their importance and make it difficult for media companies to ignore monitoring or only consider a basic system.

However, when asked if they QC and monitor content at the video processing stages before final delivery, only 21.3% reported doing so for all content. Almost 40% are only doing basic QC and monitoring, while 20% perform these processes manually, and 19% don’t monitor their content at all. Part of the reason for this disconnect is that the need for monitoring can vary by the type of content being delivered, the video compression technology being used, and the resolution. For example, mature compression technologies such as H.264 and MPEG-2 may not require comprehensive QC and monitoring, especially when used with well-known content types and a stable encoding infrastructure.

The Role of Comprehensive Manifest Validation in Delivering High-Quality Video

Preparing video for adaptive bit rate (ABR) delivery is an involved process prone to errors, with each file/VOD asset being transcoded into multiple profiles, at different resolutions, for various user devices and bandwidth limitations. Quality must be checked for every profile to ensure that no degradation has taken place. Furthermore, if the content includes ad insertions, closed captioning, or subtitling, proper checks need to be performed to ensure that ad start/stop times and captioning are aligned with the video.

This makes manifest validation one of the most important steps in ABR delivery. It looks at the entire bit rate ladder—checking all profiles—and if performed at the origin server, it can check download times and the integrity and quality of ABR delivery. Streaming providers can utilize this to identify quality-related problems that stem from transcoding and packaging. In addition, nearly three quarters of respondents agreed that manifest validation is critical to high-quality video delivery—highlighting the importance of this step.
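As a rough illustration of what walking the bit rate ladder can look like, the sketch below parses an HLS master playlist and flags variants with a missing or duplicated `BANDWIDTH` attribute. It assumes HLS as the packaging format (the survey does not name one), and the function names `parse_variants` and `validate_ladder` are invented for this example. It covers only the structural part of manifest validation, not download times or the visual quality of each profile.

```python
import re

# Matches KEY=VALUE attribute pairs; quoted values may contain commas.
_ATTR = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


def parse_variants(master_playlist: str) -> list:
    """Extract (attributes, uri) for each variant stream in a master playlist."""
    lines = [ln.strip() for ln in master_playlist.splitlines() if ln.strip()]
    variants = []
    for i, line in enumerate(lines):
        if line.startswith("#EXT-X-STREAM-INF:"):
            pairs = _ATTR.findall(line.split(":", 1)[1])
            attrs = {key: value.strip('"') for key, value in pairs}
            # The variant URI is expected on the line after the tag.
            has_uri = i + 1 < len(lines) and not lines[i + 1].startswith("#")
            variants.append((attrs, lines[i + 1] if has_uri else None))
    return variants


def validate_ladder(master_playlist: str) -> list:
    """Return structural problems found across all profiles in the ladder."""
    variants = parse_variants(master_playlist)
    if not variants:
        return ["no variant streams found"]
    problems, seen = [], set()
    for attrs, uri in variants:
        if uri is None:
            problems.append("variant without URI")
        bandwidth = attrs.get("BANDWIDTH")
        if bandwidth is None or not bandwidth.isdigit():
            problems.append(f"{uri}: missing or invalid BANDWIDTH")
            continue
        if int(bandwidth) in seen:
            problems.append(f"{uri}: duplicate BANDWIDTH {bandwidth}")
        seen.add(int(bandwidth))
    return problems
```

Running a check like this at the origin, before content is handed to a CDN, is one way to catch packaging errors early. A fuller validator would also fetch each variant playlist and its segments.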

The Challenge of Root-Cause Analysis and Error Correlation, and the Need for Multipoint Monitoring

When asked if they can perform root-cause analysis in real time for problems related to poor AV quality experienced by viewers, 27.2% of respondents said no and 37.4% reported being able to perform it “in some cases.” In other words, although participants share the view that monitoring and collecting important metrics at critical demarcation points are required to improve video quality, around a quarter cannot perform root-cause analysis in real time.

A primary reason for this is that workflows are often out of the control of service providers. Once the packaged content is handed off to a third party—which could be a CDN or multi-CDN—or when multiple organizations are involved in content distribution, problems can occur due to the diffusion of responsibility. A clear process and methodology—which requires resources and a coordinated effort to execute—must be in place to collect errors and performance statistics. For this reason, thorough testing of ABR streams earlier in the delivery chain is key for detecting and resolving issues proactively.

In this regard, the survey is a wake-up call for tool providers, who need to do a better job of correlating errors in real time while providing more contextual information to help fix problems as quickly as possible. This requires better collaboration with complementary solutions in the ecosystem, including encoding, monitoring, and device-level QoE to provide a holistic view of the media chain. Streaming companies must then put methodologies in place to harness the relevant data and take actions based on it.

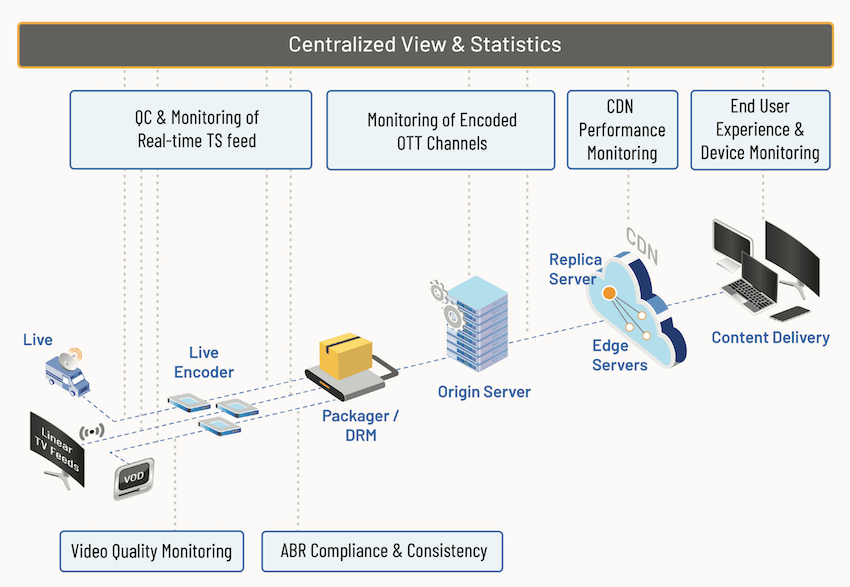

One approach is multipoint monitoring from a single dashboard showing data from various distribution points, origin servers, and CDN edge networks. When asked if such a solution would help ensure consistent and better video quality, 80% of respondents said yes. As workflows move to the cloud, and video delivery networks become centralized, multipoint monitoring with a single dashboard becomes a powerful tool, especially for live events, where real-time quality monitoring is imperative. With access to the status of various demarcation points, media companies have the power of data and the accompanying intelligence for problem resolution.
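One way to picture how status from several demarcation points supports problem resolution is a simple correlation rule: the most upstream point reporting errors is the likeliest place where the problem was introduced. The sketch below assumes a hypothetical, linear chain of points and a plain error count per point. Real delivery paths (multi-CDN, for instance) are not strictly linear, so treat this as an illustration of the idea rather than a root-cause algorithm.

```python
# Hypothetical ordering of demarcation points, upstream to downstream.
CHAIN = ["encoder", "packager", "origin", "cdn_edge", "device"]


def first_failing_point(error_counts: dict, threshold: int = 0):
    """Return the most upstream point whose error count exceeds threshold.

    Returns None when no point in the chain exceeds the threshold.
    """
    for point in CHAIN:
        if error_counts.get(point, 0) > threshold:
            return point
    return None


# Errors seen at the origin, the CDN edge, and the device point to the origin
# (or something feeding it) rather than to the CDN or the player.
suspect = first_failing_point({"origin": 3, "cdn_edge": 5, "device": 12})
```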

Device-Level Monitoring Alone Is Insufficient

Just over 51% of survey respondents agreed or strongly agreed that relying on monitoring at the device level is insufficient for addressing QoE issues; 21.3% somewhat agreed, while 27% disagreed. Video-, audio-, closed-captions-, and packaging-related errors were identified as the most challenging in terms of quality (video: 44%; audio: 40%; packaging: 31%; and closed captions: 30%). Device-level monitoring falls short here because it focuses on data such as buffering, average bit rate, and play time, but provides no information on the actual audio and video quality as perceived by the viewer. So, if picture quality is very poor but the stream is delivered without interruption, device-level QoE won’t detect the quality issues. Service providers relying on this approach are also oblivious to AV-sync-related problems or incorrect placement of subtitles.
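The blind spot can be shown with a toy example. In the sketch below, the field names, the thresholds, and the 0 to 100 `quality_score` scale are all hypothetical. The only point is that a session can pass every playback-side check while still failing a content-side quality check.

```python
def device_level_ok(session: dict) -> bool:
    """Device-side view: playback metrics only (hypothetical thresholds)."""
    return session["rebuffer_ratio"] < 0.01 and session["avg_bitrate_kbps"] >= 3000


def content_level_ok(session: dict, min_quality: float = 70.0) -> bool:
    """Content-side view: a perceptual score measured upstream (hypothetical scale)."""
    return session["quality_score"] >= min_quality


# Smooth playback at a healthy bit rate, but the picture itself is poor.
session = {"rebuffer_ratio": 0.0, "avg_bitrate_kbps": 5000, "quality_score": 42.0}
```

Here `device_level_ok(session)` returns `True` while `content_level_ok(session)` returns `False`, which is the case the device-only approach misses.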

However, this isn’t to say device-level monitoring isn’t important. It is, but it should be used in conjunction with server-level monitoring at the origin server—the place all content is kept once it’s transcoded and packaged. Validating assets at the origin server for format, compliance, and quality checks is an important element of the monitoring strategy. As the origin server will typically be closer to the content preparation stage, problems found there can be successfully fixed before the content is delivered to the consumer.

Conclusion

While the findings of this survey indicate that delivery quality continues to be of utmost importance for the video streaming industry, they also show that disjointed workflows, limited monitoring, and lack of a coordinated troubleshooting methodology seem to hinder efforts to provide better video quality. For any OTT service provider, quality assurance must include comprehensive QC for VOD, proactive monitoring at the content preparation and delivery stages, and the collection of relevant quality metrics on a continuous basis for optimum video quality and viewing experiences. In the real world, awareness of this need is continuing to increase; at least six major streaming services have been launched in the last two years, with some of them investing billions. And while much of this money is being used for content development, providers are dedicating sizable portions of their budgets to the entire end-to-end ecosystem.

In putting their QC and monitoring strategy in place, many media companies are realizing that one size does not fit all. Monitoring everything, everywhere, all the time might not be necessary for some streamers, who only require it at a couple of key points. In addition, some require more agile monitoring that’s scalable and customizable to the type of content they receive. For example, premium sports content or 4K channels require more rigorous monitoring than documentaries or news programs. Providers need to invest time and resources to understand where it makes the most sense to monitor and what metrics to pay attention to. A smart monitoring strategy and continuous assessment of performance metrics can go a long way towards a better quality of experience, enabling customer growth and increasing revenues. Interra Systems’ ORION suite provides a solution for complete quality assurance of new and next-gen media workflows.

For more information about the ORION content monitoring suite, please contact us at info@interrasystems.com.

1601 S. De Anza Boulevard, Suite 212

Cupertino, CA 95014, USA

This article is Sponsored Content
