Closed Captioning for Streaming Media

Interestingly, McLaughlin also questions whether SMPTE-TT (the Society of Motion Picture and Television Engineers Timed Text format), which the FCC regulations allow, provides the equivalency the statute is seeking for live captioned content. Specifically, McLaughlin notes that SMPTE-TT lacks the ability to smooth scroll during live playback, as TV captions do. Instead, the captions jump up from line to line, which is harder to read. You can avoid this problem by tunneling 608 data within SMPTE-TT, but not all web producers are using this technique.

McLaughlin feels that using the embedded captioning available in MP4 and MPEG-2 transport streams, as iOS devices can, is the simplest approach and provides the best captioning experience. Note that neither the sidecar nor the smooth scrolling issue presents a problem for on-demand content. With on-demand files, the captions are synchronized with the spoken word and predominantly presented via pop-up captions over the video, which are much easier to follow when they match what’s happening on screen.

While this won’t meet the FCC requirements, another option for private organizations seeking to provide a feed for deaf and hard-of-hearing viewers is the approach used by New York City Mayor Bloomberg: supplying a real-time American Sign Language interpretation of the live feed. This was the approach originally used by Lockheed Martin Corp. for its live events. Ultimately, the company found real-time captioning to be more effective and less expensive. You can see a presentation on this topic by Thomas Aquilone, enterprise technology programs manager for Lockheed Martin, from Streaming Media East.

Captioning On-Demand Files

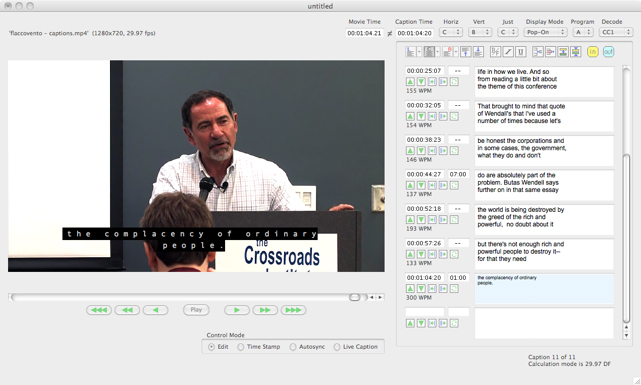

As you would suspect, captioning on-demand files is simpler and cheaper than captioning live events. There are many service providers, such as CaptionMax, to which you can upload your video files (or low-resolution proxies) and from which you can download caption files in all required formats. You can also buy software such as MacCaption from CPC Computer Prompting & Captioning Co. to create and synchronize your captions (Figure 4).

Figure 4. Using MacCaption to create captions for this short video clip

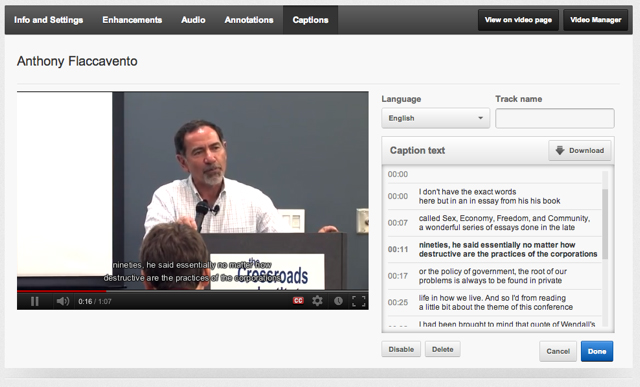

For low-volume producers, there are several free guerrilla approaches that you can use to create and format captions. For a 64-second test clip, I used the speech-to-text feature in Adobe Creative Suite to create a rough transcript, which I then cleaned up in a text editor. Next, I uploaded the source video file to YouTube, and then uploaded the transcript. Using proprietary technology, YouTube synchronized the actual speech with the text, which you can edit online as shown in Figure 5.

Figure 5. Correcting captions in YouTube

From there, you can download an .sbv file from YouTube, which you can convert to the necessary captioning format using one of several online or open source tools. I used the online Captions Format Converter from 3Play Media for my tests. Note that YouTube has multiple suggestions for creating its transcripts, many summarized in a recent ReelSEO.com article. If you’re going to use YouTube for captioning, that article is worth reading.
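To give a sense of what such a conversion involves, here is a minimal Python sketch that turns the text of an .sbv file into WebVTT. It is not how the 3Play Media tool works; it simply assumes the usual .sbv layout (a timing line such as `0:00:00.599,0:00:04.160`, followed by the caption text and a blank line). The function name `sbv_to_webvtt` is hypothetical, and the sketch does not escape special characters or carry over positioning.

```python
import re

# Assumed .sbv timing line, e.g. "0:00:00.599,0:00:04.160"
SBV_TIMING = re.compile(
    r"^(\d+):(\d{2}):(\d{2})\.(\d{3}),(\d+):(\d{2}):(\d{2})\.(\d{3})$"
)


def _vtt_time(hours, minutes, seconds, millis):
    """Format one timestamp as hh:mm:ss.mmm (zero-padding the hours)."""
    return f"{int(hours):02d}:{minutes}:{seconds}.{millis}"


def sbv_to_webvtt(sbv_text):
    """Convert the text of an .sbv file to a WebVTT string (hypothetical helper)."""
    out = ["WEBVTT", ""]
    # Captions are separated by blank lines.
    blocks = re.split(r"\n\s*\n", sbv_text.replace("\r\n", "\n").strip())
    for block in blocks:
        lines = block.split("\n")
        match = SBV_TIMING.match(lines[0].strip())
        if not match:
            continue  # skip anything that does not start with a timing line
        parts = match.groups()
        out.append(f"{_vtt_time(*parts[:4])} --> {_vtt_time(*parts[4:])}")
        out.extend(lines[1:])  # caption text, possibly several lines
        out.append("")
    return "\n".join(out)
```

You would read the downloaded file into a string, pass it to `sbv_to_webvtt`, and write the result to a `.vtt` file. A production converter would also need to handle escaping and the richer features of the target format.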

Which approach is best for your captioning requirements? Remember that there are multiple qualitative aspects to captioning, and messing up any of them is a very visible faux pas for your deaf and hard-of-hearing viewers. For example, to duplicate the actual audio experience, you have to add descriptive comments about other audio in the file (applause, rock music). With multiple speakers, you may need to position the text in different parts of the video frame so it’s obvious who’s talking, or add names or titles to the text. There are also more basic rules about how to chunk the text for online viewing.
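As a rough illustration of the chunking problem, the Python sketch below splits a transcript into caption-sized pieces. The limits are assumptions for illustration only: 32 characters per line (the row width of 608 captions) and two lines per caption. The function name `chunk_caption_text` is hypothetical, and real captioning guidelines also consider phrase boundaries and reading speed, which this sketch ignores.

```python
import textwrap


def chunk_caption_text(text, max_chars=32, max_lines=2):
    """Split text into captions of at most max_lines lines of max_chars each."""
    # Wrap on word boundaries so no line exceeds max_chars.
    lines = textwrap.wrap(text, width=max_chars)
    # Group consecutive lines into captions of max_lines lines.
    return [
        "\n".join(lines[i:i + max_lines])
        for i in range(0, len(lines), max_lines)
    ]
```

Each returned string is one caption, with its lines separated by newlines; timing for each chunk would still have to come from the synchronization step.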

Basically, if you don’t know what you’re doing and need the captioning to reflect well on your organization, you should hire someone to do it for you, at least until you learn the ropes. For infrequent use, transcription and caption formatting are very affordable, though few services publish their prices online. The lowest pricing I found was around $3 per minute, but this will vary greatly with turnaround requirements and volume commitments. Remember, again, that there is both an accuracy component and a qualitative component to captioning, so the least expensive provider is not always the best.

Captioning Your Streaming Video

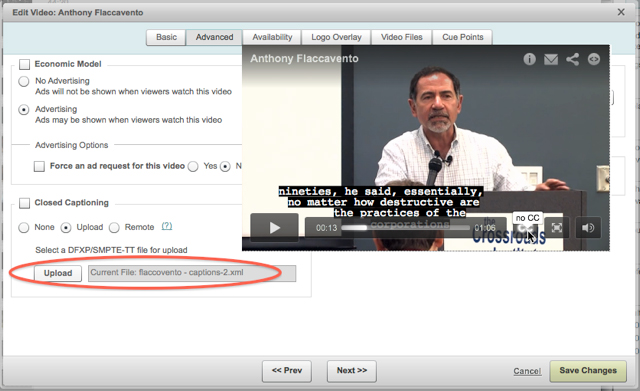

Once you have the caption file, matching it to the streaming video file is done programmatically when creating the player; all you need is the caption file in the proper format. For example, Flash uses the World Wide Web Consortium (W3C) Timed Text XML file format (TTML, formerly known as DFXP), which you can add via the FLVPlaybackCaptioning component. Brightcove, Inc., one of the OVPs used by streaming media, can accept either DFXP or the aforementioned SMPTE-TT format for presenting captions (Figure 6). Most other high-end OVPs, as well as open source players such as LongTail Video’s JW Player and Zencoder, Inc.’s Video.js, also support captions, with extensive documentation on their respective sites.
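To show roughly what a TTML caption file contains, here is a minimal Python sketch that builds one from a list of timed cues using the standard library. The function name `cues_to_ttml` and the cue tuples are hypothetical, and the sketch assumes the current W3C TTML namespace; individual players may expect an older DFXP namespace or additional styling and layout elements, so check the player's documentation before relying on this structure.

```python
import xml.etree.ElementTree as ET

# Assumption: the W3C TTML namespace; older DFXP files use different URIs.
TTML_NS = "http://www.w3.org/ns/ttml"
XML_NS = "http://www.w3.org/XML/1998/namespace"


def cues_to_ttml(cues, lang="en"):
    """Build a bare-bones TTML document from (begin, end, text) tuples."""
    ET.register_namespace("", TTML_NS)  # serialize TTML as the default namespace
    tt = ET.Element(f"{{{TTML_NS}}}tt")
    tt.set(f"{{{XML_NS}}}lang", lang)
    body = ET.SubElement(tt, f"{{{TTML_NS}}}body")
    div = ET.SubElement(body, f"{{{TTML_NS}}}div")
    for begin, end, text in cues:
        # Each caption is a <p> element with begin and end times.
        p = ET.SubElement(div, f"{{{TTML_NS}}}p", {"begin": begin, "end": end})
        p.text = text  # ElementTree escapes &, <, and > on output
    return ET.tostring(tt, encoding="unicode")
```

Calling `cues_to_ttml([("00:00:00.599", "00:00:04.160", "Hello and welcome.")])` returns the document as a string, which you could save and upload wherever the player or OVP asks for a DFXP or TTML file.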

Figure 6. Captioning in Brightcove: The player is superimposed over the video properties control where you upload the caption file.

In a way that seems almost uniquely native to the streaming media market, the various players have evolved away from a unified standard, adding confusion and complexity to a function that broadcast markets neatly standardized years ago. Examples abound. Windows Media captions need to be in the SAMI (Synchronized Accessible Media Interchange) format, while Smooth Streaming uses TTML.

As mentioned, iOS devices can decode embedded captions in the transport stream, eliminating the need for a separate caption file. With HTTP Live Streaming, however, Apple is moving toward the WebVTT spec proposed by the Web Hypertext Application Technology Working Group as the standard for HTML5 video closed captioning. HTML5 itself has two competing caption standards, TTML and WebVTT, though browser adoption of either standard is nascent. This lack of captioning support is yet another reason that large three-letter networks, which are required to caption, can’t use HTML5 as their primary player on the desktop.

For a very useful description of the origin of most caption-related formats and standards, check out "The Zencoder Guide to Closed Captioning for Web, Mobile, and Connected TV."

Trans-Platform Captioning

What about producers who publish video on multiple platforms, say Flash for desktops and HLS for mobile? We’re just starting to see transmuxing support in streaming server products that can input captions for one platform, such as HLS, and convert the captions for use in another platform, such as Flash.

For example, I spoke with Jeff Dicker from Denver-based RealEyes Media, a digital agency specializing in Flash-based rich internet applications. He reported that Adobe Media Server 5.0.1 can input captioned streams targeted for either HLS or RTMP and transmux them for delivery to any target supported by the server. For HLS, this means captions embedded in the MPEG-2 transport stream; for delivery to Flash, this means breaking out and delivering a separate caption file with the audio/video chunks.

At press time, Wowza announced Wowza Media Server 3.5, which has the ability to accept “caption data from a variety of in-stream and file-based sources before converting captions into the appropriate formats for live and on-demand video streaming using the Apple HLS, Adobe HDS and RTMP protocols.” So once you have your captions created and formatted for one platform, these and similar products will automatically convert them as needed for the other target platforms supported by the servers. (We'll have an article on using Wowza Media Server for closed captioning early next week.)

[This article will appear in the December 2012/January 2013 issue of Streaming Media magazine.]
